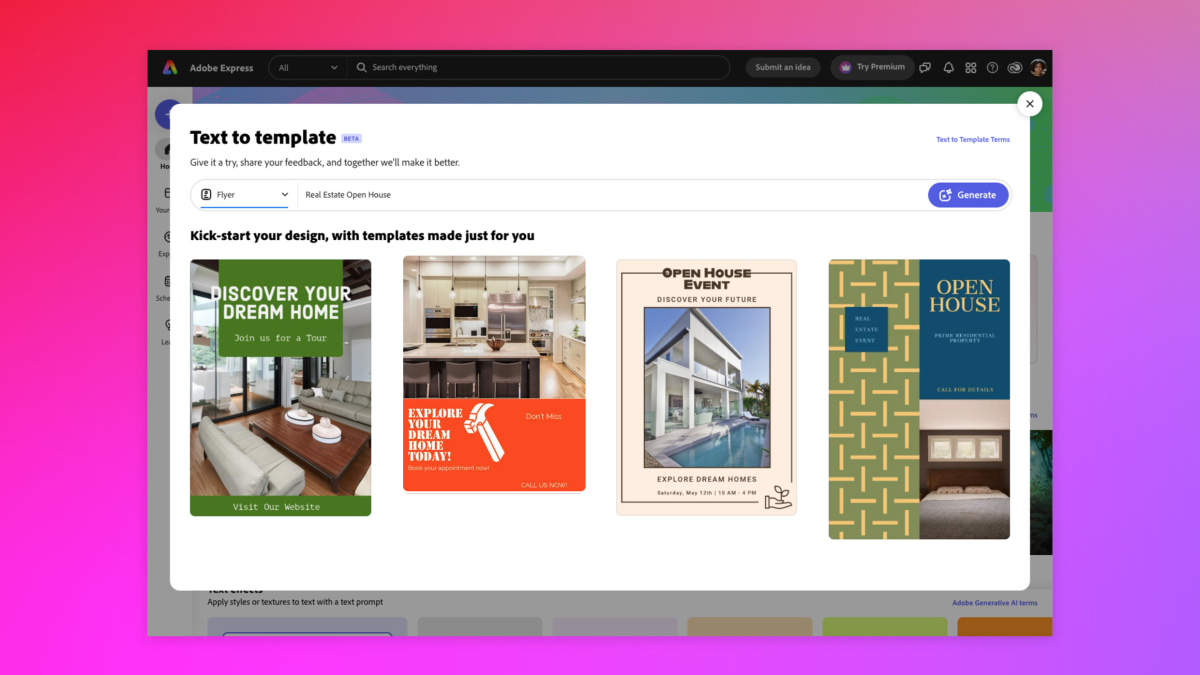

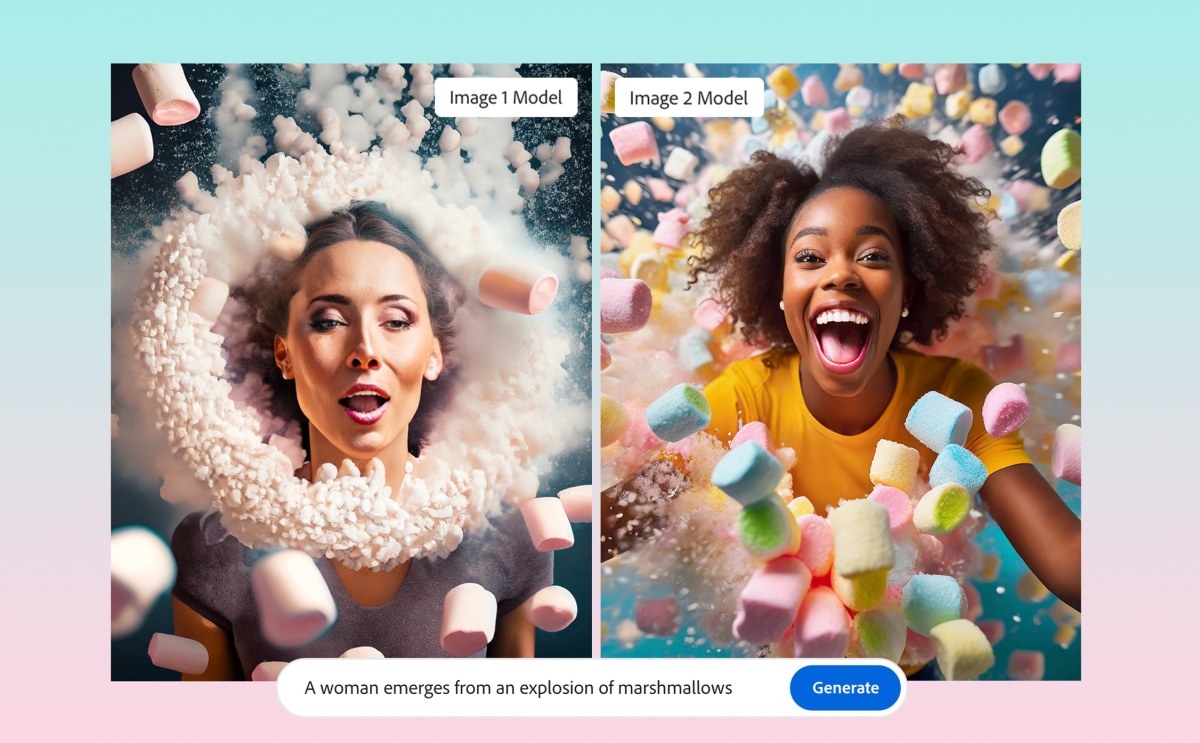

Brand guides aren’t just for humans anymore. Today, at the Adobe MAX keynote, Adobe announced the Firefly Image 2 Model, the next version of its artificial intelligence tool Firefly. Among the updates are fully editable vector image generation and a feature that stylizes AI-created images to match a specific aesthetic.

With the new features, Adobe is positioning AI as a helpful assistant who can take over the most tedious of design tasks. For example, with Generative Match, a brand’s pre-existing assets can become data points to train an AI model that can generate branded content variants on demand.

In an example shared by Adobe, a pastel textured ball and a colorfully lit image of someone listening to music were used to generate two variant images of sloths that mirrored their parent images in style, color, and lighting. And with Text to Vector in Adobe Illustrator, designers can generate vector graphics from a prompt.

If today’s content glut and the fracturing of distribution channels represent a challenge for marketers trying to capture eyeballs and attention (a “content supply chain problem,” as Adobe’s vice president of generative AI, Alexandru Costin, calls it), the company offers a solution: more content, faster.

“This has amazing implications in increasing the productivity of the individual creative professionals, but also increasing the productivity of enterprise creative departments as they go and they have to create and increase the content velocity,” he says.

People have generated more than 3 billion images through Adobe Firefly since it was announced earlier this year, and Photoshop’s generative fill feature has been adopted at a higher rate than other popular features, according to the company. AI moves fast, and Tuesday’s updates come on the heels of competitors beefing up their own AI offerings.

The tools in Canva’s Magic Studio, for example, can auto-format content for different platforms and translate text into multiple languages with a click. Adobe offers many of the same features as its competitors, but the company hopes its differentiated tools will set it apart.

Adobe touts its generative AI vector graphics capability as an industry first and imagines it being used for marketing and ad graphic creation, ideation, and mood boards. High-quality, fully editable vector images can be made to match existing brand styles through Generative Match. Mockup allows for quick, realistic product staging (drag a graphic onto an image of a can, for example, and it automatically wraps around it), and Retype identifies the fonts in a graphic and makes them editable.

Project Stardust, Adobe’s new smart-editing engine, could make carving out images with the Lasso tool in order to move them a thing of the past. It analyzes images for distinct, editable objects and takes the burden out of complex editing. In a demo, Adobe showed how a suitcase could be moved within an image simply by clicking and dragging it.

The suitcase’s shadow came along with it while the space the suitcase previously occupied was automatically backfilled with matching pavement and texture. Adobe says Project Stardust will make image editing more accessible and time efficient for users of any skill level.

“Our mantra is being able to edit as if it were real,” says Mark Nicholson, the project’s director of product management. “We want to be able to transcend beyond the pixels and layers and move towards this paradigm of object-based editing.”

Adobe also made improvements to its image generation engine, which is trained on its own stock image library. While that training data lets Adobe avoid the messy copyright issues faced by other text-to-image generators trained more broadly, the downside is that its images tend to look like stock photos.

Under the Firefly Image 2 Model, though, generative images look less stock-like thanks to additional inputs including human feedback and the company’s “own private data set,” Costin says.

“We’re driving towards photographic quality of the output,” he says.

If mastering Adobe programs like Photoshop is the graphic design equivalent of learning guitar, Firefly might be compared to Guitar Hero, simplifying skills learned by a generation of digital graphic designers down to a series of prompts and buttons.

That’s good news for novices or, say, small business owners with graphic design needs bigger than their budgets, but professional designers may find it threatening. What’s the value of shredding if you can bang out Hendrix or Clapton in five buttons or fewer?

Costin views AI not as a competitor to human designers but as a copilot, amplifying and democratizing creativity. Adobe’s tools will allow its customers “to spend more time thinking about the emotions being sent in a particular ad or in a particular story that’s being told versus the pixels that have to be assembled to tell that emotion,” he says.

An artificial guitar solo might sound convincing, but it can’t completely capture the humanity of the real thing, and perhaps AI represents an entirely new kind of guitar. Design software is adapting to novel user interface elements like the prompt, and who said being a good designer meant you had to be handy with the Lasso tool anyway?

Adobe is rolling out prompt auto-completion to help customers describe what they want to a computer, and it’s borrowing from camera settings for some of its generative editing controls, such as depth of field, motion blur, and field of view.

Costin compares AI to past technological developments like digital publishing, digital photography, and mobile. They all reduced the cost of producing content and increased the demand for more of it, he says, and those who embrace new technology will stay relevant.

“This technology will change how content is created,” he says.

This article was first published on Fast Company

MARKETING Magazine is not responsible for the content of external sites.